Credit: Google

Even if you don’t know much about the inner workings of generative AI models, you probably know they need a lot of memory. Hence, it is currently almost impossible to buy a measly stick of RAM without getting fleeced. Google Research recently revealed TurboQuant, a compression algorithm that reduces the memory footprint of large language models (LLMs) while also boosting speed and maintaining accuracy.

TurboQuant is aimed at reducing the size of the key-value cache, which Google likens to a “digital cheat sheet” that stores important information so it doesn’t have to be recomputed. This cheat sheet is necessary because, as we say all the time, LLMs don’t actually know anything; they can do a good impression of knowing things through the use of vectors, which map the semantic meaning of tokenized text. When two vectors are similar, that means they have conceptual similarity.
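To make "similar vectors" concrete, here is a minimal sketch using cosine similarity, one common way to compare embedding vectors. The three-dimensional vectors and their labels are made up for illustration; real model embeddings have far more dimensions and are learned, not hand-written.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical toy embeddings (assumed values, not from any real model).
cat = [0.90, 0.80, 0.10]
kitten = [0.85, 0.75, 0.20]
car = [0.10, 0.20, 0.95]

print("cat vs kitten:", round(cosine_similarity(cat, kitten), 3))
print("cat vs car:   ", round(cosine_similarity(cat, car), 3))
```

A score near 1 means the vectors point in nearly the same direction; in this toy data, "cat" lands much closer to "kitten" than to "car."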

High-dimensional vectors, which can have hundreds or thousands of dimensions, may describe complex information like the pixels in an image or a large data set. They also occupy a lot of memory and inflate the size of the key-value cache, bottlenecking performance. To make models smaller and more efficient, developers employ quantization techniques to run them at lower precision. The drawback is that the outputs get worse: the quality of token estimation goes down. With TurboQuant, Google’s early results show an 8x performance increase and 6x reduction in memory usage in some tests without a loss of quality.
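The precision trade-off in ordinary quantization can be sketched in a few lines. This is plain uniform min-max quantization, a generic textbook scheme and not TurboQuant itself; the function names and the random test data are assumptions chosen for illustration.

```python
import random

def quantize(values, bits):
    """Map floats onto 2**bits evenly spaced levels between min and max."""
    levels = (1 << bits) - 1
    lo, hi = min(values), max(values)
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, lo, scale

def dequantize(codes, lo, scale):
    """Recover approximate floats from integer codes."""
    return [lo + c * scale for c in codes]

def max_abs_error(values, bits):
    """Largest round-trip error after quantizing to the given bit width."""
    codes, lo, scale = quantize(values, bits)
    restored = dequantize(codes, lo, scale)
    return max(abs(v - r) for v, r in zip(values, restored))

# Assumed stand-in for one cached vector: 64 random Gaussian values.
rng = random.Random(0)
values = [rng.gauss(0.0, 1.0) for _ in range(64)]

for bits in (8, 4, 3):
    print(bits, "bits -> max error", round(max_abs_error(values, bits), 4))
```

Fewer bits means fewer levels and a larger round-trip error, which is the quality loss that more careful schemes try to avoid.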

Angles and errors

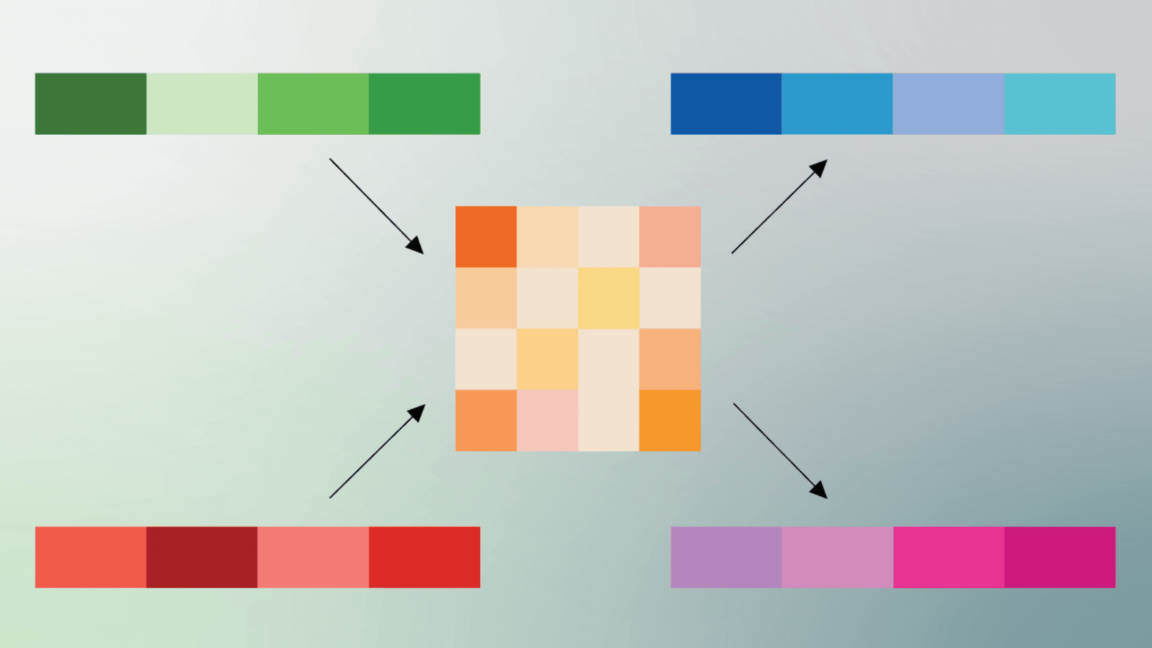

Applying TurboQuant to an AI model is a two-step process. To achieve high-quality compression, Google has devised a system called PolarQuant. Usually, vectors in AI models are encoded using standard Cartesian (XYZ) coordinates, but PolarQuant converts them into polar coordinates. On this circular grid, the vectors are reduced to two pieces of information: a radius (core data strength) and a direction (the data’s meaning).

PolarQuant acts as a high-efficiency compression bridge, converting Cartesian inputs into a compact Polar “shorthand” for storage and processing.

Google offers an interesting real-world analogy to explain this process. The vector coordinates are like directions, so the traditional encoding might be “Go 3 blocks East, 4 blocks North.” But using polar coordinates, it’s simply “Go 5 blocks at a 37-degree angle.” This takes up less space and saves the system from performing expensive data normalization steps.
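The arithmetic behind the street-directions analogy can be checked directly. This is a two-dimensional sketch of a Cartesian-to-polar conversion only; how PolarQuant handles high-dimensional vectors is described in Google's paper and is not reproduced here. The helper names are illustrative.

```python
import math

def to_polar(x, y):
    """Convert (x, y) to (radius, angle in degrees counterclockwise from East)."""
    radius = math.hypot(x, y)
    angle = math.degrees(math.atan2(y, x))
    return radius, angle

def to_cartesian(radius, angle_deg):
    """Convert back from polar form to (x, y)."""
    theta = math.radians(angle_deg)
    return radius * math.cos(theta), radius * math.sin(theta)

# 3 blocks East (x) and 4 blocks North (y).
radius, angle_from_east = to_polar(3, 4)
bearing_from_north = 90 - angle_from_east

print(round(radius, 2))              # 5.0
print(round(angle_from_east, 2))     # 53.13
print(round(bearing_from_north, 2))  # 36.87
```

The distance is 5 blocks either way. The roughly 37-degree figure corresponds to the angle measured from North; measured from East, the same direction is about 53 degrees.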

PolarQuant is doing most of the compression, but the second step cleans up the rough spots. While PolarQuant is effective, it can create residual errors. Google proposes smoothing that out with a technique called Quantized Johnson-Lindenstrauss (QJL). This applies a 1-bit error-correction layer to the model, reducing each number in the projected vector to a single sign bit (+1 or -1) while preserving the essential vector data that describes relationships. The result is a more accurate attention score, which is the fundamental process by which neural networks decide what data is important. If you’re interested in more detail, the pre-print paper is available for download.
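To show how sign bits alone can still preserve relationships between vectors, here is a minimal sketch of a sign-bit random-projection estimator in the spirit of QJL. It is an illustration under stated assumptions, not Google's implementation: the dimensions, the number of projections, and the function names are all made up, and TurboQuant's use of QJL as an error-correction step may differ in its details.

```python
import math
import random

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def sign_sketch(key, projections):
    """Project the key with random Gaussian rows and keep only each sign."""
    return [1 if dot(row, key) >= 0 else -1 for row in projections]

def estimate_dot(query, key_bits, key_norm, projections):
    """Estimate query . key from the key's sign bits and its stored norm.

    For a Gaussian row s, E[(s . q) * sign(s . k)] = sqrt(2/pi) * (q . k) / |k|,
    so rescaling the average by sqrt(pi/2) * |k| gives an unbiased estimate.
    """
    total = sum(bit * dot(row, query) for row, bit in zip(projections, key_bits))
    return math.sqrt(math.pi / 2) * key_norm * total / len(projections)

# Assumed toy sizes: 16-dimensional vectors, 4000 random projections.
rng = random.Random(0)
dim, num_projections = 16, 4000
projections = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(num_projections)]
key = [rng.gauss(0, 1) for _ in range(dim)]
query = [rng.gauss(0, 1) for _ in range(dim)]

key_bits = sign_sketch(key, projections)
key_norm = math.sqrt(dot(key, key))

print("true dot product:", round(dot(query, key), 3))
print("estimated       :", round(estimate_dot(query, key_bits, key_norm, projections), 3))
```

The number of projections here is deliberately large so the estimate is visibly close to the true value; it demonstrates that the estimator works, not a realistic compression ratio.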

TurboQuant illustrates a substantial performance increase in computing attention logits within the key-value cache across various bit-width levels, measured relative to the highly optimized JAX baseline.

Credit: Google

So does all this math work? Google says it tested the new algorithmic compression across a suite of long-context benchmarks using both Gemma and Mistral open models. TurboQuant apparently had perfect downstream results in all tests while reducing memory usage in the key-value cache by 6x. The algorithm can quantize the cache to just 3 bits with no additional training, so it can be applied to existing models. Computing the attention score with 4-bit TurboQuant is also 8x faster compared to 32-bit unquantized keys on Nvidia H100 accelerators.

If implemented, TurboQuant could make AI models less expensive to run and less hungry for memory. However, the companies creating this technology could also use the newly freed-up memory to run more complex models. It’ll probably be a mix of both, but mobile AI could see more benefit. With the hardware limitations of a smartphone, compression techniques like TurboQuant could improve the quality of outputs without sending your data to the cloud.
