Artificial intelligence company Tripo AI has introduced Tripo P1.0, a production-grade native 3D diffusion architecture designed to generate engine-ready assets directly in three-dimensional space. The company demonstrated the technology at the Game Developers Conference 2026, where developers and industry professionals saw the system in action.

Generative AI has advanced rapidly across creative industries, but producing usable 3D assets has remained difficult. Many existing AI tools can generate 3D models, yet those models often require significant manual editing before they can be used in professional pipelines.

Developers and artists frequently need to repair geometry, rebuild topology, or optimize meshes before assets can be deployed in real-time engines such as Unity and Unreal Engine. These extra steps can slow production workflows, particularly in game development and interactive media.

Tripo AI says its new Tripo P1.0 architecture was designed to address that problem by generating assets that can move directly into development pipelines without additional reconstruction.

The HD Mesh generated by Tripo AI

Unlike traditional approaches that construct 3D geometry step by step, Tripo P1.0 uses a unified probabilistic spatial framework that resolves the overall structure of an object holistically. By modeling the global form of an asset at once, the system aims to produce cleaner topology, stable geometry, and consistent structures suitable for real-time applications.

According to the company, the system can generate production-ready assets in as little as two seconds, potentially accelerating the process of creating interactive content.

During its demonstrations at the conference in San Francisco, Tripo AI showcased how its models generate assets from text prompts and reference images, and how those assets can be iterated on quickly within real-time development environments.

Alongside P1.0, the company also introduced Tripo H3.1, a high-fidelity generative model focused on improving visual accuracy and structural precision. The model includes upgrades to geometry quality, texture detail, and generation speed, supporting the creation of more complex assets such as characters, mechanical structures, and detailed objects.

Tripo AI is also exploring Tripo W1.0, an early research initiative aimed at developing world models capable of understanding and generating entire 3D environments.

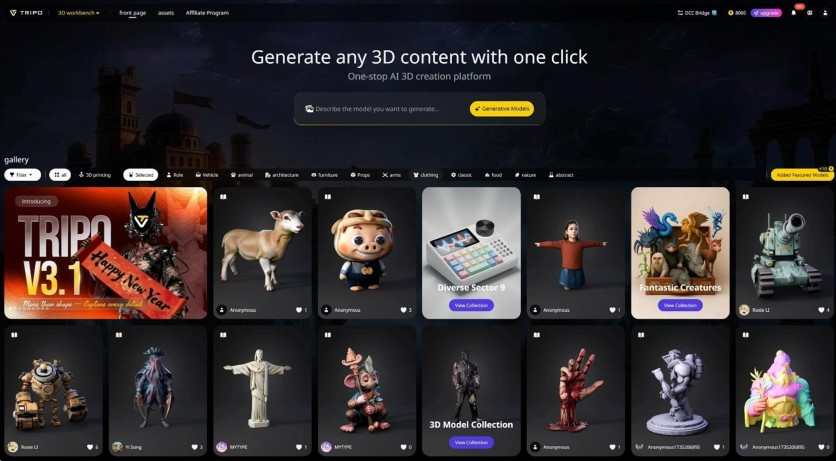

The company's ecosystem has grown significantly alongside the broader rise of AI-assisted content creation. Tripo AI reports that its platform now supports more than 6.5 million creators and 90,000 developers, with nearly 100 million 3D models generated using its tools.

Part of this ecosystem is Tripo Game Hub, a platform where developers experiment with AI-generated assets to create interactive experiences. According to the company, the hub currently includes more than 100,000 active developers and over 2,000 AI-powered interactive projects.

Tripo AI website interface and models

According to founder and CEO Simon Song, the company's focus is on making AI-generated assets immediately usable in real production environments.

"The real shift happens when AI-generated 3D assets require no reconstruction before entering production workflows," Song said. "P1.0 is built around that idea—not just assisting existing pipelines, but becoming part of them."

As demand for scalable digital environments grows across industries such as gaming, virtual reality, and interactive entertainment, technologies like native 3D diffusion could play an increasing role in how 3D content is produced. Tripo AI believes tools capable of generating production-ready assets may help accelerate development and make interactive creation accessible to a broader community of creators.


C114 Communication Network
