(Image credit: Tom's Hardware)

Intel switched to a hybrid architecture for its CPUs back in 2021 with Alder Lake, mixing performance and efficiency cores on the same package, similar to ARM-based chips. Since then, the company has iterated on the design slowly but steadily. And even though a "unified core" is in the works, for now, the hybrid architecture seems to have reached maturity from a hardware standpoint, according to Intel VP Robert Hallock.

Hallock sat down with PC Games Hardware and, during the interview, blamed the software side of things for performance issues on hybrid chips. He was referring to the practice of disabling E-cores on modern Intel CPUs to get better performance; some people report higher FPS in games when running only on the P-cores. Hallock's response was that, with E-cores on or off, "they are virtually identical in performance… it’s about 1% difference."
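
To put a figure like "about 1%" in context, here is a minimal sketch of how a relative FPS difference between two configurations is usually worked out. The frame rates are hypothetical placeholders chosen for illustration, not measurements from Intel, PC Games Hardware, or Tom's Hardware.

```python
def relative_gain_percent(baseline_fps: float, test_fps: float) -> float:
    """Return how much faster (or slower) test_fps is than baseline_fps, in percent."""
    return (test_fps - baseline_fps) / baseline_fps * 100.0


# Hypothetical numbers for illustration only, not benchmark results.
hybrid_fps = 200.0       # P-cores and E-cores enabled
p_cores_only_fps = 202.0 # E-cores disabled

gain = relative_gain_percent(hybrid_fps, p_cores_only_fps)
print(f"P-core-only vs. hybrid: {gain:+.1f}%")
```

A gap that small typically sits within normal run-to-run variance for game benchmarks, which is why a single pair of results rarely settles the question.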

He went on to explain that the immature state of Intel Thread Director in its early days contributed to better P-core-only performance. Windows' task scheduler is essentially blind without Thread Director telling it which process is better suited to which core (hence the name "director"). With better optimization, the E-cores contribute their fair share to the overall workload even though they aren't as powerful. Even simple tools like Intel's Application Optimization (APO) can help in this regard.
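
The difference between a "blind" scheduler and one that receives hints can be shown with a toy model. The sketch below is purely illustrative and is not how Windows or Thread Director actually work: the `Task` class, the `demanding` flag, and both placement functions are hypothetical names invented for this example.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    demanding: bool  # hypothetical hint: True if the task benefits from a P-core


def assign_blind(tasks, cores):
    """Round-robin placement that knows nothing about the workload."""
    return {t.name: cores[i % len(cores)] for i, t in enumerate(tasks)}


def assign_with_hints(tasks, p_cores, e_cores):
    """Send demanding tasks to P-cores and everything else to E-cores."""
    placement = {}
    p_next = e_next = 0
    for t in tasks:
        if t.demanding:
            placement[t.name] = p_cores[p_next % len(p_cores)]
            p_next += 1
        else:
            placement[t.name] = e_cores[e_next % len(e_cores)]
            e_next += 1
    return placement


# Hypothetical workload and core layout for illustration.
tasks = [
    Task("background_sync", demanding=False),
    Task("game_render", demanding=True),
    Task("game_audio", demanding=False),
    Task("game_physics", demanding=True),
]
p_cores, e_cores = ["P0", "P1"], ["E0", "E1"]

blind = assign_blind(tasks, p_cores + e_cores)
hinted = assign_with_hints(tasks, p_cores, e_cores)
print("blind: ", blind)
print("hinted:", hinted)
```

In this toy run the blind placement puts a background task on a P-core and the physics thread on an E-core, while the hinted placement keeps both demanding threads on P-cores. Real schedulers weigh far more than a single flag, but the basic value of a hint is the same.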

Beyond that, though, low ring bus frequencies with the E-cores enabled also contributed to worse performance at the time. Even if your P-cores were ready to boost much higher, the interconnect would be held to lower speeds because the E-cores on the same die just couldn't keep up. Intel has worked to decouple the core clusters more clearly in subsequent generations, like Raptor Lake and Arrow Lake.

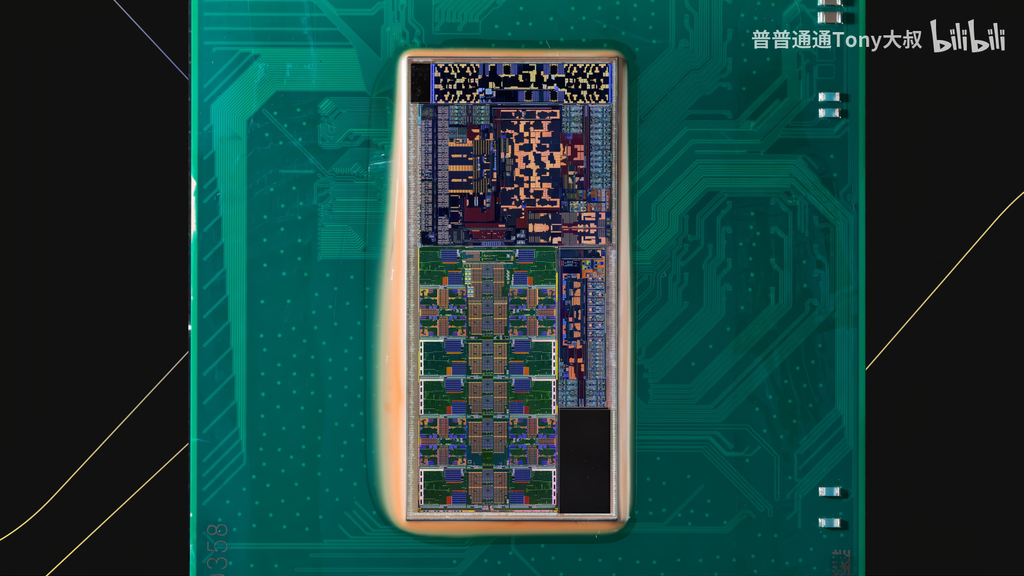

Arrow Lake 200S Die Shot (Image credit: Tony Yu from Asus, via Bilibili)

Hallock continued, saying that he truly believes "that the general PC gaming market and especially enthusiasts [...] are significantly underestimating the importance of software to the PC experience." Software optimization is the next frontier of efficiency, of extracting more performance from the same silicon, because the silicon itself is seemingly not the bottleneck.

Things like the new binary optimization feature Intel has packed inside the Arrow Lake refresh chips are an example of this. Even though it doesn't work for most apps and games yet — Geekbench even flagged it — it's proof that tuning code can lead to better performance on the same hardware. Beyond the program, everything from the driver to even the BIOS adds its own overhead, leaving performance on the table.

"Yes, you can make the game faster with a faster piece of hardware, but there's always going to be 10, 20, 30% performance hidden behind the fact that that game was just not optimized for your CPU," claimed Hallock. AMD's solution to this problem has been rather simple: just add a lot of SRAM next to the cores, aka 3D V-Cache, so that the CPU's L3 cache needs are met quickly, helping achieve higher FPS in games.

Nova Lake has something similar in the works with its bLLC (Big Last Level Cache), but that's still a hardware solution. The thought of up to 30% more performance just waiting to be extracted through better software optimization is therefore not an overstatement. In a way, Hallock is pointing fingers at developers and engineers who have optimized for AMD's relatively conventional silicon first, which holds back the true potential of Intel's hybrid architecture.
